Your course library is growing. Your enablement decks are longer. Your FAQs now have FAQs.

And the skills gap is still there.

That is not a rare situation. The pace of skill disruption is real. In its Future of Jobs Survey results, the World Economic Forum reports that employers expect 39% of workers’ core skills to change by 2030. That kind of churn creates pressure to do something visible and fast.

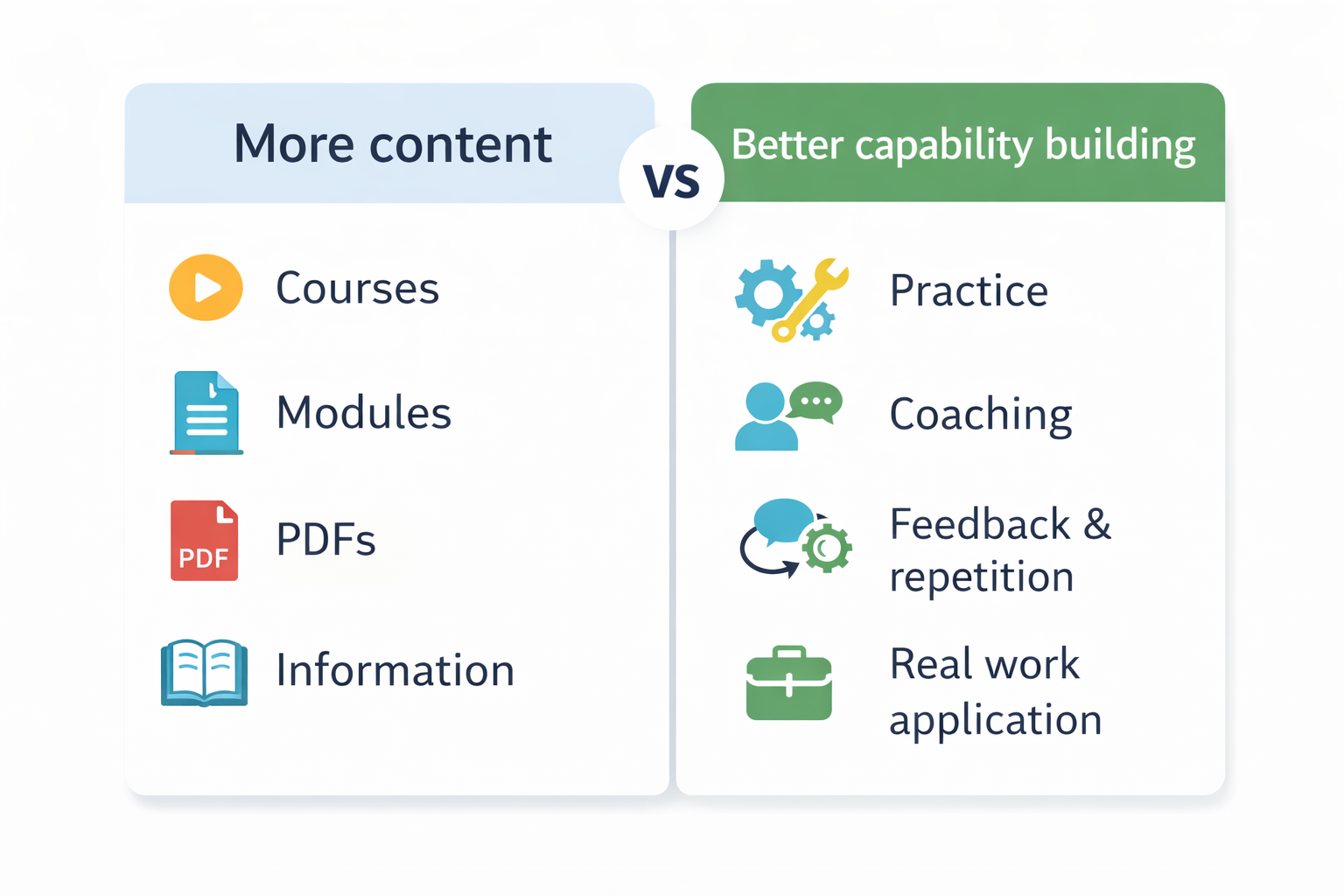

More content is the fastest visible answer. It is also the most common wrong answer.

Content is input. A skills gap is an output problem. When the gap shows up in meetings, in customer calls, in escalations, and in quality metrics, the fix is often treated like a publishing problem. Add a module. Add a lesson. Add a video. Add a slide deck.

But cognitive science has been warning us about this instinct for decades. The conditions that create fast gains in the moment are not always the conditions that create learning that lasts and transfers.

A skills gap is not “people have not seen the content.”

A skills gap is “people cannot do the task when it counts.”

Research on training transfer makes that distinction explicit. Training only matters if what is learned generalizes to the job context and is maintained over time. If performance does not change where work happens, the organization does not have a learning problem. It has a transfer problem.

That is why adding more content often disappoints. A classic transfer framework identifies three major buckets that influence whether training turns into job performance: trainee characteristics, training design, and the work environment. A later synthesis aimed at practitioners highlights consistent predictors of training transfer that include design choices such as realistic training environments and work environment factors such as transfer climate, support, opportunity to perform, and follow up.

So before you add content, it helps to run a basic test:

If someone had perfect knowledge, could they do the job anyway?

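If the answer is no, the bottleneck is not missing information. It usually sits in one of the other areas transfer research points to: how the skill is practiced, or the work environment where it has to be performed.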

There are three evidence backed reasons content expansions tend to underperform.

First, more content increases load faster than it increases skill.

Cognitive load theory focuses on how learning is constrained by cognitive architecture. One summary of the field notes that working memory, where conscious processing occurs, can handle only a very limited number of interacting elements, and that unnecessary load can interfere with schema acquisition and automation. When you respond to a skills gap by increasing the amount of information people must process, you can end up adding exactly the kind of load that suppresses learning.

This is not just theory. Even in early formulations, cognitive load work suggested that when problem solving consumes a large amount of cognitive processing capacity, less capacity is available for schema acquisition. If your training solution forces learners to juggle too many novel elements at once, they can feel busy without becoming capable.

Second, more content often pushes people toward low value study behaviors.

A major review of learning techniques found that practice testing and distributed practice received high utility assessments, while strategies many people use by default, such as rereading and highlighting, were rated as low utility for improving performance. If the fix for a skills gap is “give them more material,” learners often respond with the easiest consumption behaviors. That can create familiarity without competence.

Third, the practice that actually improves performance feels less efficient than consuming content, so people misjudge what works.

In one study on concept learning, participants rated massed study as more effective than spaced study even after their own test performance showed the opposite pattern. That is a recurring theme in learning science. Some conditions inflate confidence in the short term while producing weaker long term retention.

In practice, this is how many content heavy training programs fail:

They optimize for ease of delivery and ease of consumption.

But durable learning often requires activities that feel harder in the moment, like retrieval practice, spacing, and varied practice conditions.

Closing a skills gap is less like publishing and more like building competence under constraints. The research points to a small set of levers that show up again and again.

Build practice, not just exposure.

Practice is not repetition of slides. Practice is repeated performance of the target behavior with feedback. The techniques rated most useful in the learning techniques review were practice testing and practice distributed over time.

Use retrieval as a learning event.

A review of the testing effect summarizes a key finding: being tested on material can enhance later retention more than additional study, even when tests are given without feedback.

That matters for workplace training because many job tasks are retrieval tasks. People must recall what to do, in what order, under time pressure. Training that forces retrieval during learning is closer to the performance requirement.

Space practice over time and across contexts.

A quantitative synthesis of distributed practice analyzed 839 assessments of distributed practice across 317 experiments located in 184 articles. The point for practitioners is not to memorize the details. The point is that spacing effects are not a niche trick. They are supported across a very large research base.

Similarly, research on desirable difficulties emphasizes spacing and varying conditions of practice because such conditions support more durable and flexible learning, rather than learning that works only in the original training context.

Manage cognitive load through design, not willpower.

Cognitive load work is clear that learning can be suppressed by unnecessary load. One instructional design discussion notes that the timing of essential information can be critical, and that inappropriate timing can unnecessarily increase load.

For training design, that often means:

Give the big picture before the complex task begins, so learners can build a schema.

Deliver procedural details at the moment they are needed, not all upfront.

Reduce distractions and redundancy that force learners to search for what matters.

Design for transfer, not completion.

If you want skill on the job, training has to connect to the job environment. Practitioner oriented transfer research, using the Baldwin and Ford model, points to the consistent role of work environment factors such as support, opportunity to perform, and follow up.

That is why a “more content” plan often fizzles after launch. The training may be fine. The environment may be unchanged.

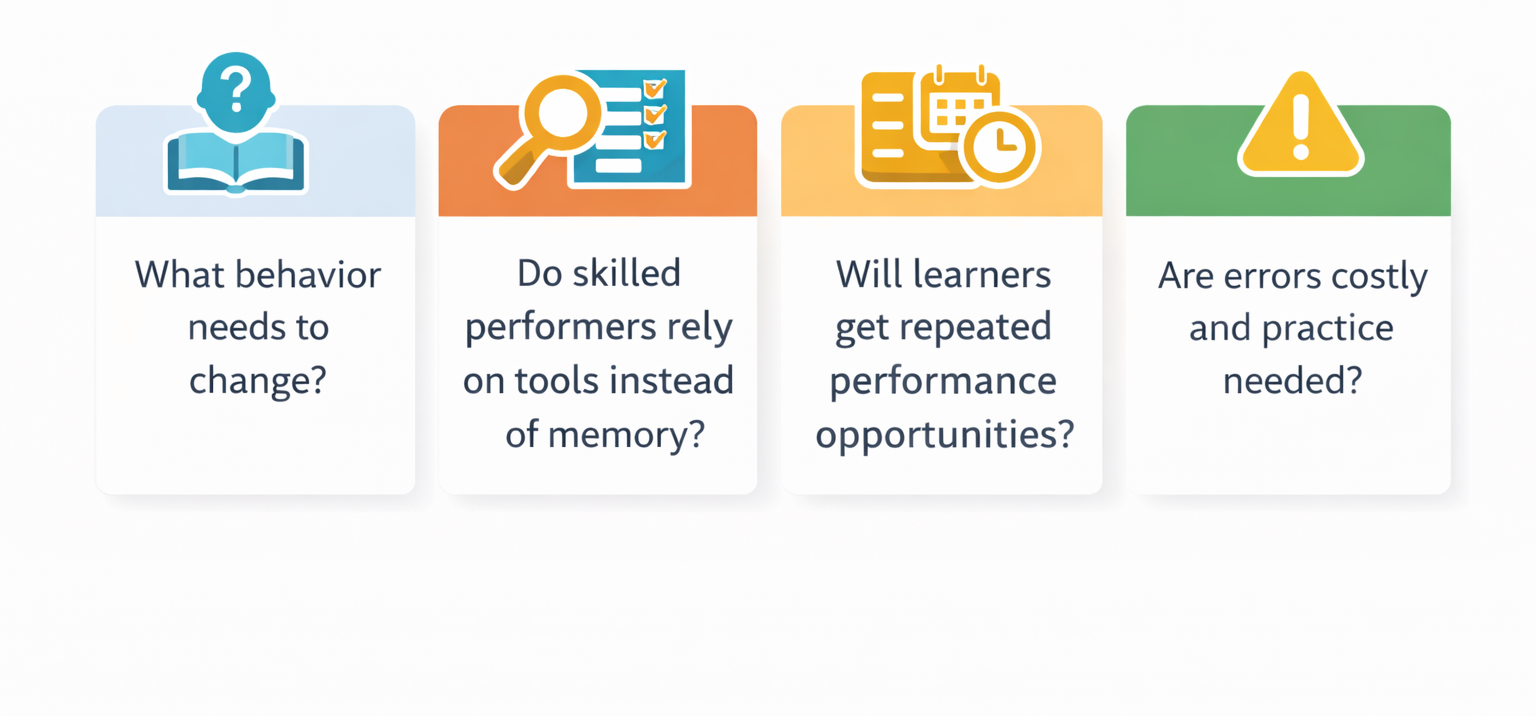

If you are being asked to add more content to “fix the skills gap,” these questions often reveal the real problem. Each aligns with what transfer research treats as the difference between learning and performance.

Can you name a single observable behavior that needs to change?

If not, the request is likely about coverage, not capability.

Can a skilled performer do the task from memory, or do they rely on tools, checklists, and job aids?

If skilled performers use support tools, you probably need better performance support, not more training content.

Will learners get repeated opportunities to perform the skill soon after training?

If not, you will need deliberate reinforcement and spaced practice, because one time exposure is not how durable learning is built.

Are errors costly enough that people need safe practice before they do it live?

If yes, you likely need scenario practice and realistic simulations more than additional information.

Is the main failure point recall, decision making, or execution under pressure?

If it is recall, retrieval practice and spaced reinforcement matter.

If it is decision making, learners need practice across varied cases, not longer explanations.

If it is execution under pressure, reduce cognitive load and practice in realistic conditions.

If “more content” is usually the wrong fix, what is the right fix?

In practice, it is a mix of experience design, practice design, and operational follow through.

On the experience and practice side, MATC’s interactive content development services explicitly cover immersive modalities such as AR and VR, including simulations and practice environments intended to support skill mastery, with examples that include leadership development and training for high risk procedures.

On the operational follow through side, MATC’s managed learning services provide end to end support for learning operations, including teams that cover instructional design, learning technology, content development, and measurement, with continuous improvement as part of the delivery model.

That mix matches what learning science tells us: capability is built through practice, spacing, feedback, cognitive load management, and reinforcement in the work system, not through bigger libraries of content.

Baldwin, Timothy T., and Ford, J. Kevin. “Transfer of Training: A Review and Directions for Future Research.” Personnel Psychology, vol. 41, 1988, pp. 63 to 105.

Bjork, Elizabeth L., and Bjork, Robert A. “Making Things Hard on Yourself, But in a Good Way: Creating Desirable Difficulties to Enhance Learning.” Psychology and the Real World: Essays Illustrating Fundamental Contributions to Society, edited by Gernsbacher, Morton Ann and Pomerantz, James R., 2nd ed., Worth Publishers, 2014, pp. 59 to 68.

Cepeda, Nicholas J., et al. “Distributed Practice in Verbal Recall Tasks: A Review and Quantitative Synthesis.” Psychological Bulletin, vol. 132, no. 3, 2006, pp. 354 to 380.

Dunlosky, John, et al. “Improving Students’ Learning With Effective Learning Techniques: Promising Directions From Cognitive and Educational Psychology.” Psychological Science in the Public Interest, vol. 14, no. 1, 2013, pp. 4 to 58.

Grossman, Rebecca, and Salas, Eduardo. “The Transfer of Training: What Really Matters.” International Journal of Training and Development, vol. 15, no. 2, 2011, pp. 103 to 120.

Kornell, Nate, and Bjork, Robert A. “Learning Concepts and Categories: Is Spacing the Enemy of Induction?” Psychological Science, vol. 19, no. 6, 2008, pp. 585 to 592.

Paas, Fred, Renkl, Alexander, and Sweller, John. “Cognitive Load Theory and Instructional Design: Recent Developments.” Educational Psychologist, vol. 38, no. 1, 2003, pp. 1 to 4.

Roediger, Henry L., and Karpicke, Jeffrey D. “The Power of Testing Memory: Basic Research and Implications for Educational Practice.” Perspectives on Psychological Science, vol. 1, no. 3, 2006, pp. 181 to 210.

Sweller, John. “Cognitive Load During Problem Solving: Effects on Learning.” Cognitive Science, vol. 12, no. 2, 1988, pp. 257 to 285.

van Merriënboer, Jeroen J. G., and Sweller, John. “Cognitive Load Theory and Complex Learning: Recent Developments and Future Directions.” Educational Psychology Review, vol. 17, 2005, pp. 147 to 177.

“The Future of Jobs Report 2025, Skills Outlook.” World Economic Forum, 2025.